The Algorithm Is Not Enough!

A landmark trial just tested an AI stethoscope across 205 NHS practices and 1.5 million patients. The technology worked. The outcomes didn't move. That gap is the most important story in health AI today.

Imagine you hand the world’s most gifted diagnostician a stethoscope, give them a room, and then never actually ask them to see any patients. The stethoscope works. The diagnostician is brilliant. Nothing changes. This is, in essence, what the TRICORDER trial just taught us about AI in healthcare — and the lesson could not be more timely, or more uncomfortable.

Published in The Lancet earlier this year, TRICORDER was the first pragmatic, cluster-randomised controlled trial of a clinical AI technology deployed at scale across a national health system. Researchers embedded an AI-enabled stethoscope — capable of detecting heart failure, atrial fibrillation, and valvular heart disease from a single 15-second recording — into 96 NHS primary care practices serving over 700,000 patients. The algorithms had regulatory approval. The science was sound. The unmet need was enormous.

And yet: after 12 months, the intention-to-treat analysis found no significant difference in detection rates between practices with the AI stethoscope and those without it.

Let that sit for a moment. The AI worked. The clinicians were trained. The devices were free. The study ran for a full year. And still — at a population level — the needle barely moved.

When Good Technology Meets Real Life

We in the health innovation space have a tendency to speak in the language of breakthroughs. Algorithm achieves cardiologist-level accuracy. AI detects cancer earlier than ever before. Model outperforms specialists in reading scans. The press releases write themselves. And to be fair, many of these claims are technically true.

But TRICORDER peels back the curtain on what comes next: the long, messy, deeply human process of getting a technology to actually function inside a living health system. And what the investigators found is a story we keep refusing to take seriously.

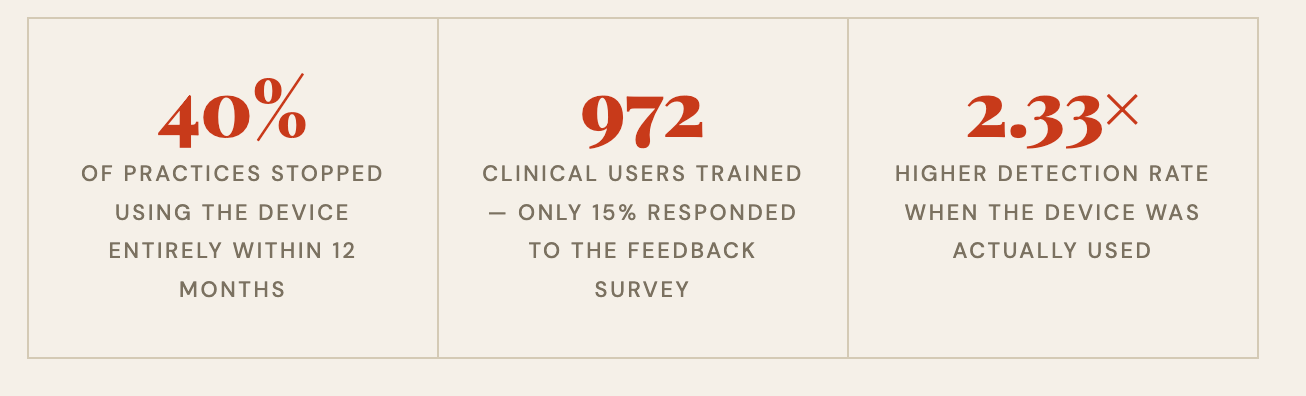

Among the 972 clinical staff trained to use the device, adoption collapsed. Nearly 40% of practices were no longer using the technology by month 12. Usage was heavily skewed — the top five practices accounted for 34% of all recordings, with one outlier practice alone responsible for nearly a fifth of all use.

The single most-cited barrier? The technology didn’t fit into how clinicians already worked. There was no integration with electronic health records. Clinicians had to manually enter results. Capturing a clean waveform signal was sometimes frustrating. When asked to rank what would most improve uptake, clinicians placed EHR integration above financial incentives. They didn’t want to be paid more to use it. They wanted it to stop getting in their way.
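The adoption figures in this section (share of recordings from the busiest practices, share of practices no longer using the device by month 12) are simple to compute once per-practice usage logs exist. What follows is a minimal sketch, not the trial's analysis code: the function names, practice labels, and counts are all hypothetical and chosen only to illustrate the two metrics.

```python
def top_k_share(recordings_by_practice, k):
    """Fraction of all recordings made by the k busiest practices."""
    counts = sorted(recordings_by_practice.values(), reverse=True)
    total = sum(counts)
    return sum(counts[:k]) / total if total else 0.0


def inactive_fraction(monthly_counts, month):
    """Fraction of practices with zero recordings in the given month (1-indexed)."""
    inactive = sum(1 for series in monthly_counts.values() if series[month - 1] == 0)
    return inactive / len(monthly_counts)


# Hypothetical recordings per practice for months 1..12 (illustrative, not trial data).
monthly_counts = {
    "practice_a": [40, 38, 35, 30, 30, 28, 25, 25, 22, 20, 20, 18],
    "practice_b": [12, 10, 8, 5, 3, 2, 0, 0, 0, 0, 0, 0],
    "practice_c": [6, 4, 2, 1, 0, 0, 0, 0, 0, 0, 0, 0],
    "practice_d": [9, 9, 8, 8, 7, 7, 6, 6, 5, 5, 4, 4],
    "practice_e": [3, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0],
}

# Collapse each practice's monthly series into a 12-month total.
totals = {name: sum(series) for name, series in monthly_counts.items()}

print(f"Share of recordings from the busiest practice: {top_k_share(totals, 1):.0%}")
print(f"Practices inactive at month 12: {inactive_fraction(monthly_counts, 12):.0%}")
```

Tracking both numbers month by month, rather than only at the end of a study, would show when usage starts to concentrate and when practices drop out.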

The Per-Protocol Signal Is the Real Story

Here is where the TRICORDER data becomes genuinely exciting — and genuinely instructive.

When researchers looked only at patients who were actually examined with the AI stethoscope and compared them to propensity-matched controls, the results were striking. Heart failure detection was 2.33 times higher. Atrial fibrillation detection was 3.45 times higher. Valvular heart disease detection nearly doubled. Time to diagnosis was shorter across all three conditions.

This is not a story about AI falling short. This is a story about what AI can deliver when it is used as intended — and a blunt reckoning with how rarely that actually happens in practice.
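The distance between a strong per-protocol signal and a flat intention-to-treat result can be illustrated with simple arithmetic. If the device multiplies detection by some rate ratio among the patients actually examined, but only a fraction of eligible patients are examined, the population-level ratio shrinks to 1 + coverage × (rate ratio − 1). The sketch below uses the 2.33 heart failure figure quoted above; the coverage values are hypothetical, and the calculation assumes examined and unexamined patients share the same baseline detection rate, which real-world data rarely guarantees.

```python
def population_rate_ratio(rate_ratio_when_used, coverage):
    """Population-level rate ratio when only a fraction of patients is examined.

    Assumption (for illustration only): examined and unexamined patients have
    the same baseline detection rate, so the effect simply dilutes with coverage.
    """
    if not 0.0 <= coverage <= 1.0:
        raise ValueError("coverage must be between 0 and 1")
    return 1.0 + coverage * (rate_ratio_when_used - 1.0)


# 2.33 is the per-protocol heart failure figure from the article;
# the coverage levels are hypothetical.
for coverage in (0.01, 0.05, 0.25, 1.0):
    rr = population_rate_ratio(2.33, coverage)
    print(f"coverage {coverage:>4.0%}: population rate ratio {rr:.2f}")
```

Under these assumptions, a tool used on a small share of eligible patients produces a population-level effect close to 1, however well it performs when it is used.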

We’ve Been Asking the Wrong Question

For the past decade, the central question in health AI has been: does the algorithm work? Sensitivity. Specificity. AUC. Model performance on validation datasets. These are necessary questions. They are not sufficient ones.

The question that TRICORDER forces us to confront is different: Under what conditions will this technology actually be used, consistently, by real clinicians, in real settings, with real patients?

That is an implementation question. It is a behavioural question. It is an organisational design question. And it is a question the health AI field has been chronically under-resourced and under-incentivised to answer.

Compare TRICORDER with the EAGLE trial in the United States, which tested an AI algorithm for detecting low ejection fraction embedded directly into digital ECG reports, triggering an automatic EHR alert recommending echocardiography. Near-complete uptake. Increased detection. The contrast with TRICORDER is not a story of better or worse technology — it is a story of integration. In EAGLE, the AI lived inside the workflow. In TRICORDER, it sat beside it.

Implementation Is Not an Afterthought

I have spent considerable time thinking about why health systems struggle to adopt beneficial innovations. And what TRICORDER crystallises for me is this: we consistently treat implementation as something that happens after the science, when it must be treated as an integral part of the science itself.

We fund algorithm development. We fund validation studies. We fund regulatory pathways. We almost never fund the messy, unglamorous work of understanding how a busy GP, seeing 30 patients a day, under administrative pressure, with a decade-old EHR that crashes on Tuesdays, will actually incorporate a new tool into their practice. And then we express surprise when they don’t.

The TRICORDER investigators deserve enormous credit for designing a trial that takes this seriously from the outset. This was not simply a clinical effectiveness study. It was deliberately designed as a randomised controlled implementation trial — a study that simultaneously evaluated both the clinical effect and the contextual factors shaping adoption. That design choice is itself a contribution to the field.

The result is a dataset that will be immensely valuable not just for understanding AI stethoscopes, but for understanding how to deploy clinical AI in any resource-constrained, real-world health system. The barriers they identified — workflow friction, lack of EHR integration, absence of financial incentives aligned with use — are not specific to this device. They are the universal antagonists of health innovation.

What Needs to Change

We need to stop measuring AI success at the moment of regulatory approval and start measuring it at the moment of sustained clinical use. These are very different milestones, and conflating them has cost us years of potential benefit.

We need to design AI tools for the workflows they will enter, not for the workflows we wish existed. That means speaking to clinicians before the product is built, not after. It means EHR integration as a minimum viable feature, not a roadmap item. It means understanding that a 15-second interaction that adds two minutes of friction is not a 15-second interaction — it is a tax on already depleted clinical attention.

We need commissioners and health system leaders to ask harder questions when evaluating AI tools. Not just “does it work in a trial?” but “what did adoption look like? What dropped off? Who used it and who didn’t? What changed when they did?” TRICORDER gives us a blueprint for how to generate that evidence rigorously.

And we need funders to recognise that implementation science is not soft science. Understanding why an intervention fails to change behaviour in complex adaptive systems is as technically demanding — and as consequential — as building the algorithm itself.

The Stethoscope Still Works

I want to be clear about what TRICORDER did not find. It did not find that AI stethoscopes are ineffective. It did not find that the algorithms were poor. It did not find that clinicians were hostile or patients resistant.

It found that when the device was actually used, it detected significantly more disease. Earlier. In patients who would otherwise have waited for a crisis. That is a genuine clinical signal, and it matters enormously given that over 70% of heart failure in the UK is still diagnosed after an unplanned hospitalisation, despite half of those patients having had symptomatic presentations to primary care beforehand.

The technology works. The potential is real. The gap between potential and reality is almost entirely an implementation problem. And implementation problems are solvable — if we take them as seriously as we take the algorithms.

TRICORDER is a gift to the field, precisely because it was honest about failure. It shows us exactly where the gap is. Now we need the will, the funding, and the intellectual humility to close it.

The algorithm is not enough. It never was. The future of health AI will be won or lost not in the lab, but in the workflow.

Rubin Pillay

Physician-scientist and innovation strategist working at the intersection of health systems, emerging technology, and human behaviour. Interested in why good ideas so often fail to become good outcomes, and what it takes to close that gap.

Really important point. As this example shows, a great algorithm on its own doesn’t guarantee better outcomes if it doesn’t integrate with the workflow, clinician needs, and the broader system. That’s why we see human factors and systems thinking as central to effective health innovation. At QuILL there is a focus on evaluating technologies in realistic clinical environments and with real end-users, because it’s only by understanding the sociotechnical context that we can translate innovation into real impact.

Good article - thank you for sharing.

Brilliant narrative, Rubin. Thank you for recognising what so many in our profession wilfully ignore or simply have a blind spot for. When will we learn just how much effort and resource ends up in the graveyard of worthy developments that failed? So-called innovators have failed if they don't impact patient care. Too many sad and misguided careers are driven by the goal of publishing papers.